from lxml import etree

import pandas as pd

import requests

import random

import time

BASE_DOMAN = "https://www.dytt8.net"

HEADERS = {
    "User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/80.0.3987.132 Safari/537.36"
}

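def get_text_html(url,HEADERS):
    # Fetch a page and return it as a parsed lxml tree, or None if the request fails.
    try:
        response = requests.get(url,headers=HEADERS,timeout=10)
        # Decode as GBK; errors='ignore' drops bytes that cannot be decoded.
        text = response.content.decode(encoding='gbk',errors='ignore')
        html = etree.HTML(text)
        return html
    except Exception as e:
        print(e)
        return None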

def get_detail_urls(url):
    # Collect the absolute detail-page URLs from one listing page.
    # url = "https://www.dytt8.net/html/gndy/china/list_4_1.html"
    html = get_text_html(url,HEADERS)
    if html is None:
        # The listing page could not be fetched, so there is nothing to parse.
        return []
    detail_urls = html.xpath("//table[@class='tbspan']//a/@href")
    detail_urls = set(detail_urls)
    # Drop the two index links; discard() does not raise if a link is absent.
    detail_urls.discard('/html/gndy/dyzz/index.html')
    detail_urls.discard('/html/gndy/jddy/index.html')
    return [BASE_DOMAN+detail_url for detail_url in detail_urls]
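
The link clean-up done in `get_detail_urls` can be tried without touching the network. The following is a minimal sketch, assuming a hypothetical helper `build_detail_urls` that is not part of the original script. It swaps string concatenation for `urllib.parse.urljoin` and filters out the two index links instead of calling `remove`. The sample path is made up for illustration.

```python
from urllib.parse import urljoin

BASE = "https://www.dytt8.net"

# Index links that the script removes from the collected hrefs.
INDEX_LINKS = {
    "/html/gndy/dyzz/index.html",
    "/html/gndy/jddy/index.html",
}

def build_detail_urls(hrefs, base=BASE):
    """Hypothetical helper: de-duplicate hrefs, drop index links, make them absolute."""
    seen = set()
    urls = []
    for href in hrefs:
        if href in INDEX_LINKS or href in seen:
            continue
        seen.add(href)
        urls.append(urljoin(base, href))
    return urls

sample = [
    "/html/gndy/dyzz/index.html",
    "/html/gndy/dyzz/20200101/1.html",  # made-up path, for illustration only
    "/html/gndy/dyzz/20200101/1.html",  # duplicate, should appear once
]
print(build_detail_urls(sample))
```

Unlike `list(set(...))`, this version keeps the links in page order, which makes the crawl easier to follow in the printed output.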

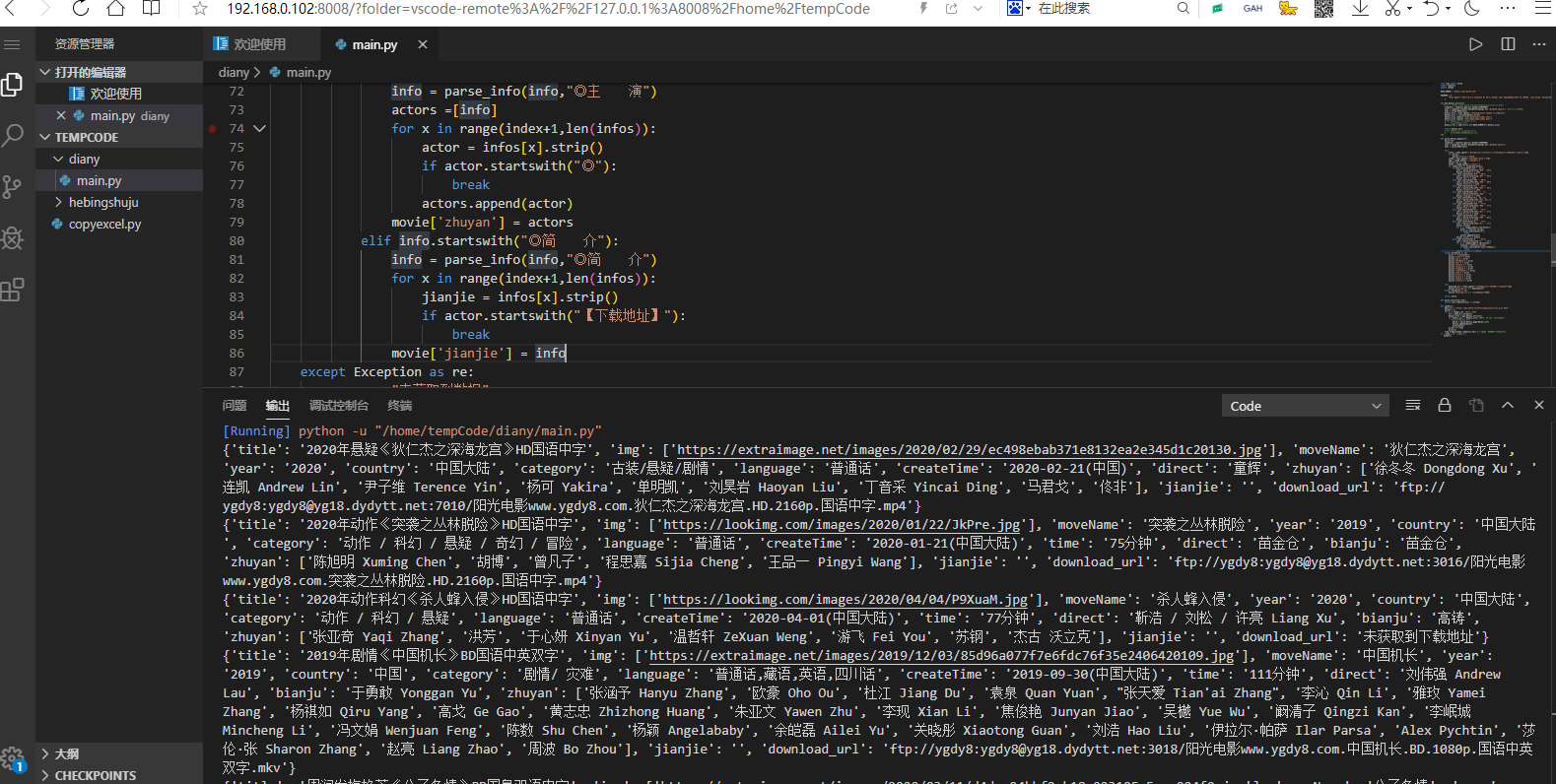

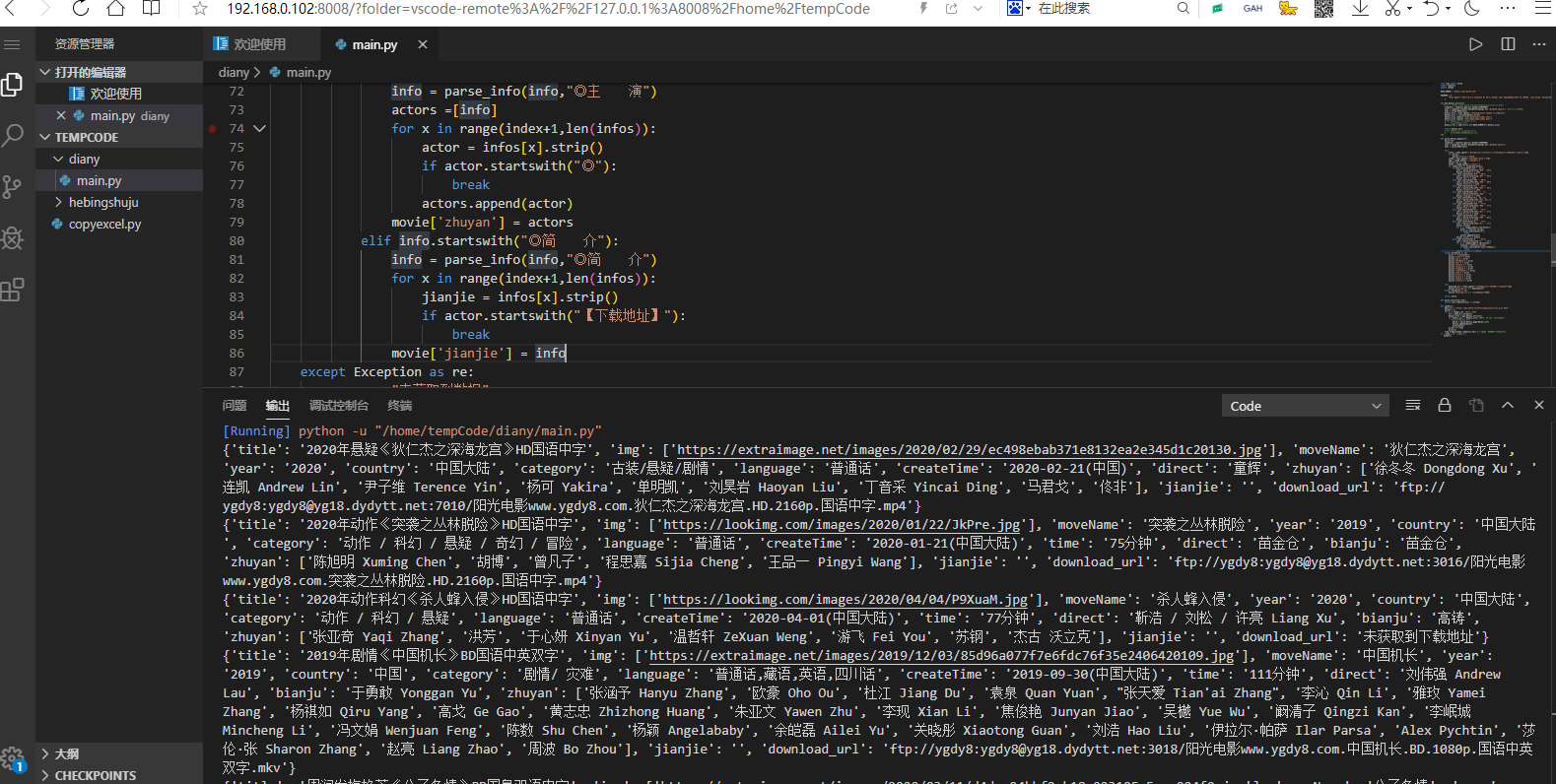

def parse_detial_page(url):
    # Parse one detail page into a dict of movie fields.
    movie = {}
    html = get_text_html(url,HEADERS)
    try:
        title = html.xpath("//div[@class='title_all']//font[@color='#07519a']/text()")[0]
        if title:
            movie['title'] = title
        zoomE = html.xpath("//div[@id='Zoom']")[0]
        img = zoomE.xpath(".//img/@src")
        movie['img'] = img
        # Every text node inside the Zoom div; each field starts with a "◎" label.
        infos = zoomE.xpath(".//text()")
        for index,info in enumerate(infos):
            if info.startswith("◎片 名"):
                info = parse_info(info,"◎片 名")
                movie['moveName'] = info
            elif info.startswith("◎年 代"):
                info = parse_info(info,"◎年 代")
                movie['year'] = info
            elif info.startswith("◎产 地"):
                info = parse_info(info,"◎产 地")
                movie['country'] = info
            elif info.startswith("◎类 别"):
                info = parse_info(info,"◎类 别")
                movie['category'] = info
            elif info.startswith("◎语 言"):
                info = parse_info(info,"◎语 言")
                movie['language'] = info
            elif info.startswith("◎上映日期"):
                info = parse_info(info,"◎上映日期")
                movie['createTime'] = info
            elif info.startswith("◎片 长"):
                info = parse_info(info,"◎片 长")
                movie['time'] = info
            elif info.startswith("◎导 演"):
                info = parse_info(info,"◎导 演")
                movie['direct'] = info
            elif info.startswith("◎编 剧"):
                info = parse_info(info,"◎编 剧")
                movie['bianju'] = info
            elif info.startswith("◎主 演"):
                info = parse_info(info,"◎主 演")
                actors =[info]
                # The cast continues on the following lines until the next "◎" label.
                for x in range(index+1,len(infos)):
                    actor = infos[x].strip()
                    if actor.startswith("◎"):
                        break
                    actors.append(actor)
                movie['zhuyan'] = actors
            elif info.startswith("◎简 介"):
                info = parse_info(info,"◎简 介")
                # The synopsis spans the following lines, up to the awards or download section.
                # Collect every non-empty line rather than keeping only the last one read.
                lines = [info] if info else []
                for x in range(index+1,len(infos)):
                    jianjie = infos[x].strip()
                    if jianjie.startswith("◎获奖情况"):
                        break
                    elif jianjie.startswith("【下载地址】"):
                        break
                    if jianjie:
                        lines.append(jianjie)
                movie['jianjie'] = "\n".join(lines)
    except Exception:
        # Fetching or parsing failed: fill every field with a placeholder.
        error = "data not retrieved"
        for key in ('title','img','moveName','year','country','category','language',
                    'createTime','time','direct','bianju','zhuyan','jianjie'):
            movie[key] = error
    try:
        download_url = html.xpath("//td[@bgcolor='#fdfddf']/a/@href")[0]
        movie['download_url'] = download_url
    except Exception:
        movie['download_url'] = "download URL not retrieved"
    return movie

def parse_info(info,rule):
    # Remove the field label (rule) from a line and trim surrounding whitespace.
    return info.replace(rule,"").strip()
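
The label handling can be checked on plain strings before running it against a live page. Below is a small sketch under stated assumptions: the `infos` list is made-up data standing in for the text nodes of the `Zoom` div, and `collect_until_next_label` is a hypothetical helper that mirrors the cast loop, except that it also skips empty lines.

```python
def parse_info(info, rule):
    # Same idea as the function above: drop the label, trim whitespace.
    return info.replace(rule, "").strip()

def collect_until_next_label(infos, start):
    """Hypothetical helper: gather non-empty lines after `start` until the next '◎' label."""
    items = []
    for line in infos[start + 1:]:
        line = line.strip()
        if line.startswith("◎"):
            break
        if line:
            items.append(line)
    return items

# Made-up text nodes, for illustration only.
infos = ["◎年 代 2019", "◎主 演 Actor A", "  Actor B ", "", "◎简 介", "Some synopsis."]

year = parse_info(infos[0], "◎年 代")
actors = [parse_info(infos[1], "◎主 演")] + collect_until_next_label(infos, 1)
print(year, actors)
```

Single-line fields only need `parse_info`. Multi-line fields such as the cast need the look-ahead loop, because each extra name arrives as its own text node with no label.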

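def spider():
    # Crawl the listing pages one by one and parse every detail page they link to.
    base_url = "https://www.dytt8.net/html/gndy/china/list_4_{}.html"
    movies = []
    for x in range(1,125): # total number of listing pages to crawl (pages 1 to 124)
        url = base_url.format(x)
        detail_urls = get_detail_urls(url)
        for detail_url in detail_urls: # visit every detail URL on this listing page
            # print(detail_url)
            movie = parse_detial_page(detail_url)
            movies.append(movie)
            print(movie)
            print("***"*10)
        print(x)
        time.sleep(random.randint(5,20)) # sleep a random 5 to 20 seconds between pages
    return movies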

if __name__ == "__main__":
    spider()
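
The `movies` list built in `spider()` is not written anywhere in this listing. `pandas` is imported at the top, so a DataFrame may be what the author uses for that step. As a standard-library alternative, here is a minimal sketch with `csv.DictWriter`. The helper `write_movies_csv`, the chosen subset of columns, and the sample record are all assumptions made for illustration.

```python
import csv
import io

# A subset of the keys produced by parse_detial_page; extend as needed.
FIELDS = ["title", "year", "zhuyan", "download_url"]

def write_movies_csv(movies, fileobj, fields=FIELDS):
    """Hypothetical helper: write movie dicts as CSV rows, joining list values with '/'."""
    writer = csv.DictWriter(fileobj, fieldnames=fields, extrasaction="ignore")
    writer.writeheader()
    for movie in movies:
        row = {}
        for key in fields:
            value = movie.get(key, "")
            # Fields such as the cast are lists; flatten them into one cell.
            row[key] = "/".join(value) if isinstance(value, list) else value
        writer.writerow(row)

# Made-up record, for illustration only.
movies = [{
    "title": "Example",
    "year": "2019",
    "zhuyan": ["Actor A", "Actor B"],
    "download_url": "ftp://example.invalid/a.mkv",
}]
buf = io.StringIO()
write_movies_csv(movies, buf)
print(buf.getvalue())
```

To write a real file instead of an in-memory buffer, pass a handle opened with `open("movies.csv", "w", newline="", encoding="utf-8-sig")`. The `utf-8-sig` encoding adds a byte order mark, which helps spreadsheet programs detect UTF-8 and display the Chinese text correctly.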

Result: